Living Instruments

Living Instruments is a collaborative project between Swiss musician Serge Vuille (under the umbrella of his project WeSpoke) and the DIY science crowd of the community laboratory Hackuarium.

It is a musical composition for a series of instruments that use bacteria, yeast and other living organisms to generate music. By interacting with the organisms and recording the sounds, gas bubbles, pressure, and movements they generate, the artists can transform data into music, creating a live, semi-improvised musical piece.

This page is a work in progress: a living documentation of the project that is updated regularly.

What

EN - This collaboration aims at three goals:

- To build a set of living instruments that use biological information or organisms as sources of signal.

- To compose a musical piece (creation) using these instruments and turning their output signal into sound.

- To place this project in the context of the current state of DIY, science and music.

FR - Cette collaboration a trois buts:

- Construire des instruments vivants (intégrant le vivant comme source de signal)

- Création d'une œuvre musicale (composition) en utilisant ces instruments

- Placer la démarche collaborative dans le contexte DIY, scientifique, et musical

Project Description (2000 characters)

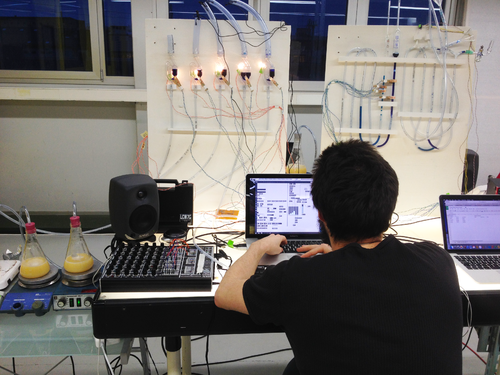

The ‘Living Instruments’ project is a live lecture/performance that uses microorganisms and other organisms to generate sound. It was created by the collective WeSpoke in the laboratory of the Hackuarium community in Lausanne, Switzerland, by a team of musicians, life scientists and (sound) designers. ‘Living Instruments’ reveals a hidden world, encourages interdisciplinary thinking and presents biology, technology and their relation to sound as an otherworldly musical experience. The performers slip into the roles of laboratory scientists, forming a hybrid setup in which the interactions between humans and other living matter generate music through sensors, electronic devices and computers. By applying heat, movement, or algorithmic tracking, performers 'measure' living matter with counters, microscopes and antennas. These sensors are placed at several locations on the 'instruments', and the data recorded live is turned into sound through synthesisers and music software. The nature of these instruments allows the performers to engage with them very interactively, though without complete control, and the cyclic behaviour of the living pieces creates rich grooves and rhythmic patterns. Enlarged video footage of fermentation, swarming microorganisms, human-moss interactions, traces of radioactive decay and other curiosities of nature is displayed on a large screen behind the performers, highlighting the beauty of the dynamics between the scientific process and the musical outcome. We Spoke and Hackuarium have been invited to present ‘Living Instruments’ in the Kammer Klang series at Cafe OTO, London, as well as during the International Summer Course for New Music in Darmstadt, Germany. Various formats of the project were also performed in Switzerland at the Kunsthalle Zürich, at Le Bourg in Lausanne, at the Dürrenmatt Centre in Neuchâtel and during the Klang Moor Schopfe festival in Gaïs.

Who is involved?

- Serge Vuille, musician (personal website)

- Vanesa Lorenzo, product & media designer (personal website)

- Luc Henry, biologist and science communicator

- Robert Torche, sound designer (personal website)

- Oliver Keller, electronics and sensors

- We Spoke musicians

When

The prototypes were presented in the context of the N/O/D/E Festival "Laboratoire Meet & Geek" on 30.01.16 at Pôle Sud Lausanne.

The final deliverable was a concert/performance that took place on February 10th 2016, at the music and performance art venue Le Bourg, in Lausanne, Switzerland. More information about the event can be found here.

The concert was followed by a workshop/masterclass at the Lift conference on innovation and digital technologies in Geneva, Switzerland, on February 12th 2016. More information about the workshop can be found here.

In August 2016, the performance was part of WeSpoke's "carte blanche" at the International Summer Course for New Music in Darmstadt, Germany. It was presented as a workshop on "Biochemistry and white noise" on Saturday August 13th at the Lichtenbergschule and performed in full on Sunday August 14th at the venue Centralstation.

Past Concerts

- 10 February 2016: Opening Concert, Le Bourg, Lausanne, Switzerland

- 12 February 2016: Workshop/masterclass and performance, Lift conference, Geneva, Switzerland

- 14 August 2016: Concert, Centralstation, Darmstadt, Germany

- 3 April 2017: OTO project days - Instruments exhibition and workshop

- 4 April 2017: Concert - Kammer Klang series, Cafe OTO, London, UK

- 20-21 May 2017: Interactive exhibition and performance during the Nuit des Musées - Centre Dürrenmatt, Neuchâtel, Switzerland

- 8 September 2017: Interactive exhibition and Concert - Klang Moor Schopfe Festival, Gaïs, Switzerland

- 31 January 2018: Concert - Le Bourg, Lausanne

- 21 October 2018: Concert and workshop - Kunsthalle Zürich in the context of the science festival 100 Ways of Thinking (organised by the Graduate Campus of the University of Zürich). More information about the concert is available here.

- 16 March 2019: Workshop and performance - Invited by the BCUL (Library of the University of Lausanne) for their Samedi des bibliothèques : Ramène ta science!

- 19 September 2020: Vanesa & Oliver took part in a larger jam session - Performance at Other Planes of There: Speculative Listening Sessions, Gessnerallee, Zürich

- 19 November 2020: Vanesa & Oliver performed "My Eyes Are Green" in a dedicated concert evening of the Other Planes of There program. Full recording on SoundCloud. This improvised live piece was inspired by their work developed within Living Instruments.

What's Next

- 19 November 2020: Vanesa & Oliver as performance duo in Other Planes of There: Speculative Listening Sessions. Gessnerallee, Zürich.

Stay tuned! And if you want to help us bring Living Instruments to new audiences, please get in touch. In any case, we will update you on the upcoming residencies and events dedicated to bringing living instruments back to life.

Press

The Living Instruments project was in the media on several occasions.

Here is a selection:

Le Temps Vidéos - "Musique biologique: orgue à levures et mousse qui chante" by Aurélie Coulon (12 Feb 2016)

CLOT Magazine - "Serge Vuille & Luc Henry, artistic experimentation, music, and living systems" by Diana Cano Bordajandi (19 Oct 2017)

RTS radio program Magnétique - "WeSpoke et ses "Living Instruments" au N/O/D/E" (21 Jan 2018)

Description of the installation

Different combinations of the following instruments were used, from the world premiere performed at the music venue Le Bourg in Lausanne on February 10th 2016 until the latest performances. All of them have evolved from prototypes. The descriptions below aim to document the latest iteration accurately.

Bubble Organ

The concept

The Bubble Organ is an instrument powered by fermenting yeast cultures.

This video of the first minutes of the performance will give you an idea of the sounds produced by this instrument.

The setup

The following items are necessary for the construction of the Bubble Organ:

- 5x Erlenmeyer flasks (1x 10L, 1x 4L, 1x 3L, 1x 2L, 1x 1L)

- 5x drilled rubber/silicone corks for fermenter airlocks (top diameter/bottom diameter: 35/29 mm, 55/47 mm and 65/55 mm, depending on the Erlenmeyer flask)

- 5x S-shaped "Double Bubble" airlocks for beer and wine fermentation (transparent) fitted with 30mm plastic tubing (8x1.5mm) and various (19x2.5mm) on each side.

- 5x 1500mm plastic tubing (6x1.5mm)

- 10x White LED

- 5x Wooden Clothes Pegs

- 1x Arduino MEGA equipped with a USB host shield.

- 1x 12V power supply

- 1x 5m USB 2.0 A to B shielded cable (computer to Arduino)

- 1x 1m USB 2.0 A to B shielded cable (keyboard to Arduino)

- 1x ION Discover keyboard USB

The circuit

The controller for this instrument was based on an Arduino Mega equipped with an Arduino USB host shield.

The software

The complete Arduino code can be found here: Living-Instruments-Controller.

Tube Log

A description will be available soon

Moss Carpet

The concept

The moss carpet was designed and realised by Vanesa Lorenzo.

The moss carpet (also called the mossphone) is a moss-human interface conceived in 2013 and developed further as an interdisciplinary research project on natural bioreporters: indicator species, such as mosses and lichens, through which we can sense the environment and climate change. Mosses are tiny organisms, among the first plants to emerge from the ocean and conquer the land; they are unique and delicate native species with slow temporalities of growth, which can help decipher the secrets of life on Earth. They are pioneer species that can live in very harsh conditions and provide important microhabitats for an extraordinary variety of organisms and plants. Interaction with the mossphone produces playful sound feedback that changes depending on the person in contact with it. When the moss is touched, the human becomes, in a way, part of the same electrical element, changing its electrical properties (sensed by a capacitive sensor); these changes are the data that is captured and converted into sound. The result is the illusion that the moss is alive and reacts to our way of touching it, as if it were singing, snarling, murmuring or growling.

This video will give you an idea of the sounds produced by this instrument.

Electronic circuit with an active capacitive sensor or antenna: the organic interactive object invites touch and reacts to physical contact by emitting real-time sinusoidal sound feedback, through serial communication with Pure Data or MaxMSP.
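As a rough illustration of the idea described above, a capacitive reading could be mapped to the frequency of a sine tone along the following lines. This Python sketch is not the project's Arduino, Pure Data or MaxMSP code; the reading range (0 to 1023), the frequency range and the function names are all assumptions chosen for illustration.

```python
import math


def reading_to_frequency(reading, lo=0, hi=1023, f_min=110.0, f_max=880.0):
    """Map a raw sensor reading to a frequency on an exponential (musical) scale.

    The sensor range and frequency range are hypothetical defaults.
    """
    # Clamp the reading to the assumed sensor range.
    clamped = max(lo, min(hi, reading))
    position = (clamped - lo) / (hi - lo)
    # Exponential interpolation: equal steps in touch give equal musical intervals.
    return f_min * (f_max / f_min) ** position


def sine_samples(frequency, duration_s=0.01, sample_rate=44100):
    """Return a short list of sine-wave samples in the range [-1, 1]."""
    n = round(duration_s * sample_rate)
    return [math.sin(2 * math.pi * frequency * i / sample_rate) for i in range(n)]


if __name__ == "__main__":
    # A light touch and a firm touch (hypothetical readings).
    for reading in (200, 800):
        freq = reading_to_frequency(reading)
        print(reading, round(freq, 1), len(sine_samples(freq)))
```

An exponential mapping is used here because pitch perception is roughly logarithmic in frequency; a linear mapping would work as well but would crowd most of the audible change into the low readings.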

First prototype (first iteration): magic moss.

[1] Moss growing old, but wise with other living instruments.

The setup

Moss

1 receive antenna: conductive thread or metal wires and 2.2 MΩ resistors (x2)

The circuit

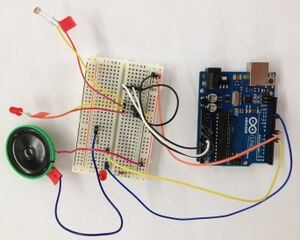

The controller for this instrument was based on an Arduino Uno. The full instructions are available here.

The software

This software uses the CapSense Library (Paul Badger, 2008), in the IMI version for 12 sensors, which sends the readings to Max/MSP (use regexp to decode).

Full software here

Sounds of Radioactivity

The concept

Natural radioactivity surrounds us everywhere on Earth and is a truly random process. Certain radioactive elements decay into other elements by releasing energy in the form of elementary particles and other forms of ionizing radiation (alpha, beta, gamma radiation etc.). The timing and amount of released energy can be recorded by sensors, which makes it possible to turn this fundamental quantum physics process into rich sound and visuals. In the concerts, slightly radioactive everyday objects such as vintage uranium glass, potassium salt (KCl, a sodium-free alternative to table salt), old radium watches, and alpine stones from St. Gallen are used to trigger the detectors with sub-atomic particles. The radioactivity of environmental radon gas is collected and demonstrated during concerts with a simple electrostatically charged party balloon. Apart from those terrestrial sources of particles, muons from cosmic radiation are detected and sonified as well.
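The random timing of decay events can be imitated in software when no source or detector is at hand. The sketch below is only a simulation under a standard assumption: decays from a weak, steady source are modelled as a Poisson process, so the gaps between events follow an exponential distribution. The count rate and the function name are hypothetical and are not taken from the project's detectors.

```python
import random


def simulate_decay_times(rate_per_s, duration_s, seed=None):
    """Return simulated event times (in seconds) for a Poisson process.

    rate_per_s is an assumed mean count rate; real rates depend on the
    source and the detector.
    """
    rng = random.Random(seed)
    times = []
    # Inter-arrival times of a Poisson process are exponentially distributed.
    t = rng.expovariate(rate_per_s)
    while t < duration_s:
        times.append(t)
        t += rng.expovariate(rate_per_s)
    return times


if __name__ == "__main__":
    events = simulate_decay_times(rate_per_s=2.0, duration_s=10.0, seed=42)
    gaps = [b - a for a, b in zip(events, events[1:])]
    print(len(events), "events; shortest gap", round(min(gaps), 3), "s")
```

Used as trigger times for a sound, such a sequence has no regular pulse: clusters and long silences both occur, which is the irregular character the real detectors bring to the performance.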

Hardware & Software

In his work at CERN, Oliver Keller designed different detectors for natural radioactivity and added sonification for this project.

iPadPix

The initial detector turning radioactivity into sounds, which was part of our concerts from the start, is based on the iPadPix prototype. It is a mobile device that visualizes radioactivity from a hybrid pixel detector by means of augmented reality on an iPad mini. The recorded data is each particle's arrival time, type, and energy. The particle data is sent via WiFi to the iPad for visualization in an open-source iOS app. At the same time, the data is sent in parallel to a computer running a receiver program written in Processing that triggers audio samples and MIDI events. The MIDI connection in turn triggers a Korg Volca for percussive sounds and sometimes a larger modular synthesizer.
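To make the data flow above more concrete, here is a minimal sketch of how one particle record (arrival time, type, energy) could be turned into a MIDI note-on message. It is written in Python rather than Processing and is not the project's receiver program: the note chosen for each particle type, the energy scale and all names are hypothetical. Only the note-on byte layout (status byte `0x90` plus channel, then note and velocity) follows the MIDI standard.

```python
from dataclasses import dataclass


@dataclass
class ParticleEvent:
    arrival_time_s: float
    kind: str          # e.g. "alpha", "beta", "gamma" (labels assumed here)
    energy_kev: float


# Hypothetical mapping from particle type to a percussion note number.
NOTE_FOR_KIND = {"alpha": 36, "beta": 38, "gamma": 42}
DEFAULT_NOTE = 60


def energy_to_velocity(energy_kev, max_energy_kev=5000.0):
    """Scale energy linearly to a MIDI velocity in 1..127 (assumed scale)."""
    scaled = int(round(127 * min(energy_kev, max_energy_kev) / max_energy_kev))
    return max(1, min(127, scaled))


def note_on_bytes(event, channel=0):
    """Build the three bytes of a MIDI note-on message for one event."""
    note = NOTE_FOR_KIND.get(event.kind, DEFAULT_NOTE)
    status = 0x90 | (channel & 0x0F)
    return bytes([status, note, energy_to_velocity(event.energy_kev)])


if __name__ == "__main__":
    event = ParticleEvent(arrival_time_s=0.25, kind="beta", energy_kev=800.0)
    print(note_on_bytes(event).hex())
```

In this sketch the particle type selects the drum voice and the energy sets how hard it is hit; the arrival time would decide when the message is sent.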

Sometimes we also explain the instruments in short solo demonstrations; this is the part on iPadPix from the very first opening concert in 2016 at Le Bourg, Lausanne. We kept explanations briefer or skipped them entirely in later performances, depending on the framework of the evening.

DIY Particle Detector

By the end of 2019, another rather low-cost DIY particle detector based on cheap silicon photodiodes was added to this project with sonification. The `pulse-recorder.py` script in this repository uses the wonderful pyo sound synthesis library for generating experimental plucked string sounds based on the Karplus-Strong algorithm. The output is also fed into a modular synthesizer for triggering and generation of further sounds.
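For readers unfamiliar with the Karplus-Strong algorithm mentioned above, the following is a minimal pure-Python sketch of its basic form: a short buffer of noise is played in a loop while each sample is replaced by the damped average of itself and its neighbour. It does not use pyo and is not taken from `pulse-recorder.py`; the decay factor and function name are assumptions.

```python
import random
from collections import deque


def karplus_strong(frequency, duration_s, sample_rate=44100, decay=0.996, seed=None):
    """Generate plucked-string samples with the basic Karplus-Strong loop."""
    rng = random.Random(seed)
    # The delay-line length sets the pitch: one loop per period.
    period = max(2, int(sample_rate / frequency))
    # The "pluck" is a burst of white noise filling the delay line.
    buffer = deque(rng.uniform(-1.0, 1.0) for _ in range(period))
    samples = []
    for _ in range(int(duration_s * sample_rate)):
        first = buffer.popleft()
        samples.append(first)
        # Averaging two neighbours acts as a low-pass filter; decay damps the string.
        buffer.append(decay * 0.5 * (first + buffer[0]))
    return samples


if __name__ == "__main__":
    tone = karplus_strong(220.0, 0.5, seed=0)
    print(len(tone), "samples; peak", round(max(abs(s) for s in tone), 3))
```

Each detected particle pulse could trigger one such pluck, for instance with the pitch or decay chosen from the pulse height; that mapping is left open here.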

Virtual Soprano

The Virtual Soprano was used in the first two performances of the Living Instruments, but was abandoned in subsequent versions. Software detects your facial expression through a computer's webcam and turns it into vocal sounds, like a virtual soprano. The complete documentation can be found here.

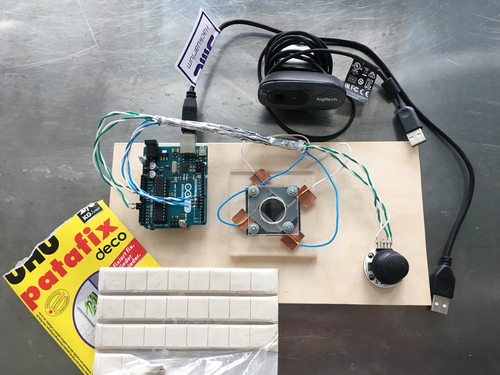

Paramecia Controller

The Paramecia Controller is an instrument inspired by a similar module described by Urs Gaudenz from GaudiLabs (instruction video here).

After several prototypes, the SeePack microscope camera was replaced by a Logitech C270. The device was built according to the previously described design (instruction video here), except that the bottom acrylic glass plate was kept intact (with a piece of black paper underneath it, between the acrylic and the bottom wooden panel). A rubber joint was also added between the two acrylic glass plates (see picture below).

The first prototype, used in the first performances, looked like this:

A second, more robust, version was made for the performance in Gaïs in September 2017 and used for the following performances:

The circuit

The circuit is composed of an Arduino UNO, 2 x 2 pairs of cables (1 pair for the joystick and 1 pair for the electrodes). The joystick was the Play-Zone Arduino PS2.

The software

The complete Arduino code can be found here.

Paramecia Bolero

The Paramecia Bolero is based on a mini-microscope.

The software

The MaxMSP software patch used is available here.

Video

The Mixer

Roland HD Video Switcher V-1HD

Log

Preparation before "100 Ways of Thinking"

When?

Friday 7th September-Sunday 9th September 2018

Who was there?

- Luc Henry

- Oliver Keller

- Robert Torche

Final days before first performance

When?

Every day 1st-8th February 2016

10am - 8pm (or midnight.. depending on the day)

Who was there?

- Serge Vuille

- Vanesa Lorenzo

- Luc Henry

- Alain Vuille

- Oliver Keller

- Robert Torche

- We Spoke musicians (7-8th Feb)

N/O/D/E Festival

What?

Several prototypes of the Living Instruments project were featured at the N/O/D/E digital culture festival.

The 2016 edition was about Algorithms and Big Data and hosted an annual theremin meet-up.

The festival is organised by Association Longueur d’Ondes (coordination & programming: Coralie Ehinger & Julie Henoch).

Where?

The Meet & Geek Laboratory

2nd floor at the "Pôle Sud" Cultural Center in Lausanne.

When?

30.01.2016 from 9am to 6pm

Who was there?

- Vanesa Lorenzo

- Luc Henry

- Alain Vuille

Construction weeks 25-29.01.2016

When?

Every day 25th-29th January 10am - 6pm (or midnight.. depending on the day)

Connecting FaceOSC to MaxMSP to try out sound patterns.

Who was there?

What?

Blubblob Pond

Blob with sea water organisms from a local pet store in a crystal ball, with camera, laser cut structure on transparent polyvinyl and metal screws and nuts.

Connected to MaxMSP.

Facetracker Tree

Tryouts with soundpatterns.

Construction weeks 18-25.01.2016

When?

Every day 11-16th and 18th-25th January 9am - 5pm (or midnight.. depending on the day)

Who was there?

- Serge Vuille

- Vanesa Lorenzo

- Luc Henry

- Alain Vuille

- Gilda Vonlanthen

- Oliver Keller

Building the modules

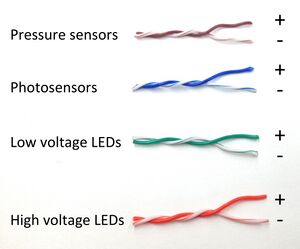

Wiring:

The whole system was wired using twisted-pair cables from Ethernet cables.

To avoid confusion, the 4 types of components were wired using a colour code (see picture below).

Fermentation cultures:

We used pure brewing yeast cultures growing in YPD medium and feeding on glucose to produce CO2 gas:

- Starting from 2mL dense culture (from overnight growth from plate) in 250 mL fresh medium.

- The next day, the dense cultures were supplemented with 50 mL of 40% glucose solution (not sterile) -> 8% final glucose concentration.

- The cultures started producing CO2 after 30 min (2 mL CO2 per minute)

- The cultures could be used after 90 minutes (5 mL CO2 per minute)
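The gas production rates listed above determine how often a culture "plays". As a back-of-the-envelope sketch, the time between bubbles can be estimated from the flow rate if one assumes a fixed bubble volume. The 0.1 mL bubble volume used below is a guess for illustration, not a measured value from the airlocks, and the function name is hypothetical.

```python
def bubble_interval_s(flow_ml_per_min, bubble_volume_ml=0.1):
    """Estimate the seconds between bubbles for a given CO2 flow.

    bubble_volume_ml is an assumed average; it depends on the airlock
    and tubing actually used.
    """
    if flow_ml_per_min <= 0 or bubble_volume_ml <= 0:
        raise ValueError("flow and bubble volume must be positive")
    bubbles_per_min = flow_ml_per_min / bubble_volume_ml
    return 60.0 / bubbles_per_min


if __name__ == "__main__":
    # Flow rates reported above: 2 mL/min after 30 min, 5 mL/min after 90 min.
    for flow in (2.0, 5.0):
        print(flow, "mL/min ->", round(bubble_interval_s(flow), 2), "s between bubbles")
```

Under that assumption, the culture would go from roughly one bubble every 3 seconds at 30 minutes to one every 1.2 seconds at 90 minutes, which gives a feel for why the cultures were considered usable only after the later time point.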

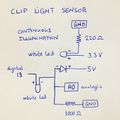

Bubble sensors:

Bubble sensors were built using a white LED and a photodiode. The setup was inserted in a wooden clothespin.

An option for the calibration of the sensors was explored based on a tutorial proposed by Arduino.
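The calibration and detection logic can be sketched as follows. This is a Python illustration rather than the Arduino code used in the project: it assumes that a passing bubble raises the light level reaching the photodiode, calibrates by recording the minimum and maximum readings, and counts bubbles with two thresholds (hysteresis) so that a single bubble is not counted twice. The threshold values and function names are hypothetical.

```python
def calibrate(readings):
    """Return the (lowest, highest) raw reading seen during calibration."""
    return min(readings), max(readings)


def normalise(value, lo, hi):
    """Scale a raw reading to 0.0-1.0 within the calibrated range."""
    if hi == lo:
        return 0.0
    return max(0.0, min(1.0, (value - lo) / (hi - lo)))


def count_bubbles(readings, lo, hi, on=0.6, off=0.4):
    """Count bubbles as rising edges above `on`, re-armed below `off`."""
    count = 0
    in_bubble = False
    for value in readings:
        level = normalise(value, lo, hi)
        if not in_bubble and level >= on:
            in_bubble = True
            count += 1
        elif in_bubble and level <= off:
            in_bubble = False
    return count


if __name__ == "__main__":
    # Made-up photodiode readings containing two bright pulses.
    trace = [100, 102, 101, 400, 410, 105, 99, 390, 395, 400, 100]
    lo, hi = calibrate(trace)
    print(count_bubbles(trace, lo, hi), "bubbles detected")
```

If the optics work the other way round (a bubble darkens the signal), the same logic applies with the comparison inverted.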

Microphones:

Microphone/Headphone Amplifier Stereo DIY kits (MK136, Velleman) were purchased from Distrelec and built according to the instructions.

Paramecia tracking device:

A SeePack microscope camera (see picture below) was mounted on our Carl Zeiss Axiolab E re microscope.

The EasyCapViewer 0.6.2 software was used as a player to record live images.

Mossphone:

Hunting the moss, building the platform and wiring it to Arduino.

Programmed in the Arduino IDE.

HOW TO PROGRAM/WIRE TO ARDUINO AND COMMUNICATE WITH MAXMSP soon on Github.

FacetrackerTree:

A device that tracks human expression and a code to translate it to sound patterns.

The code, built on openFrameworks, is available here: FaceOSC [2]

HOW TO COMMUNICATE WITH MAXMSP soon on Github.

Prototyping Week-end 19-21.09.2015

When?

Saturday 19th September 11am - 7pm

Sunday 20th September 10am - 8pm

Monday 21st September 10am - 6pm

Who was there?

- Serge Vuille

- Vanesa Lorenzo

- Luc Henry

- Michael Pereira

Brainstorming Session

We spent those three days brainstorming at UniverCité, trying to design 'instruments' that we could play, based on fermenting yeast cultures.

The 'instruments' we envisage would be driven by living organisms that produce work we can turn into sound. The most obvious example is fermentation (microorganisms eating glucose and releasing alcohol and CO2), which produces a gas that one can use to make bubbles, sounds, etc.

These instruments can fall into 3 categories:

LIVE - AUTONOMOUS AND COMPUTER INTERACTION: No human needs to act in order to produce a sound from the living organisms' activity.

- Gas producing fermentation broth (Bacteria and yeast) and gas-machine interactions

- Insects (moths, fruit flies) movement recognition software

LIVE - HUMAN INTERACTION: A human subject will read a score written by living organisms and play an 'instrument' according to this score.

- Keyboard and microscopic score -> observe microorganisms in water from local pond -> organisms move in microscope field on "score" slide

RECORDED - COMPUTER INTERACTION: A recorded signal from an organism is used as a score and played either directly by a computer or by a human subject.

- Using, for example, genomic data (from the organisms used in other 'instruments') to generate sound. This could be simple (mapping A, T, G and C to notes) or complex (using the codon triplets that encode amino acids).
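The simple variant of this last idea can be sketched in a few lines. The example below maps each base of a DNA sequence to a MIDI note number and, as a step towards the more complex variant, splits the sequence into triplets. The note chosen for each base is an arbitrary assumption (there is no note named T, for instance), and the function names are hypothetical.

```python
# Hypothetical base-to-note mapping (MIDI note numbers: A3, D4, G3, C4).
NOTE_FOR_BASE = {"A": 57, "T": 62, "G": 55, "C": 60}


def sequence_to_notes(sequence):
    """Turn a DNA string into a list of MIDI notes, skipping unknown symbols."""
    return [NOTE_FOR_BASE[base] for base in sequence.upper() if base in NOTE_FOR_BASE]


def codons(sequence):
    """Split a DNA string into complete triplets, dropping any leftover bases."""
    clean = [base for base in sequence.upper() if base in NOTE_FOR_BASE]
    usable = len(clean) - len(clean) % 3
    return ["".join(clean[i:i + 3]) for i in range(0, usable, 3)]


if __name__ == "__main__":
    fragment = "ATGGCCATTGTA"  # made-up sequence for illustration
    print(sequence_to_notes(fragment))
    print(codons(fragment))
```

A triplet-based version could then assign a chord, rhythm or instrument to each of the triplets, which gives a much larger vocabulary than four single notes.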

Building the first prototype

Together with Serge Vuille, our musician in residence, we designed and built a series of living instrument prototypes.

(Thanks biodesign.cc for the soldering iron!)

Overview of the prototype:

Before we go into too much detail, you can check the video!

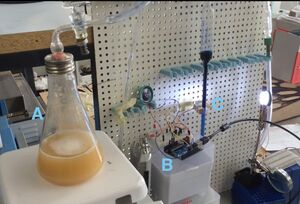

Now for a bit more detail. Below you will find a picture of the setting, the recipe to produce CO2 from yeast fermentation and a schematic of the bubble detector device.

Tubing set-up:

A. is the 250mL yeast culture producing the CO2.

B. is the electronic circuit that allows our Arduino UNO board to make a variable sound when gas bubbles are going through the glass tube filled with ink-coloured water.

C. is simply a photodiode that will sense white light (from white LEDs) when the bubbles pass by.

Fermentation cultures:

We used two different types of cultures to produce CO2 gas:

As a test, we used a random culture from the juice of rotting fruit.

- Blackberries were squashed into a juice and diluted with 1/5 water (40 mL into 160 mL) and the mixture stirred at room temperature. There was an immediate production of CO2 gas.

To get something more reproducible, we used pure brewing yeast cultures feeding on glucose.

- The composition of the overnight medium was as follows: 2.5g YE, 5g Peptone, 5g glucose